(Image -- courtesy of TBA -- has nothing to do with this production, but at least is a place where I have worked.)

Technically the show has many challenges. I'm still struggling to define the "sound" for the show, which is being unveiled only slowly as we finish solving issues of monitors, band balance, off-stage singer placement, and RF interference. I'll get into those, and lessons learned: but probably in another post. For the moment I'll say only that this company, like many theater companies, has trouble accommodating the "music" part of "musical." Music is constrained and degraded by choices across the productions, from poor pit placement to limited rehearsal time.

(And at this company in particular, FOH is thought of as a trade, not an art. It is considered as something that could be done by the numbers by anyone with nimble fingers and sufficiently detailed notes from the director. The kinds of real-time artistic choice (and compromise) you have to make whilst flying the desk in front of an audience...well, even conceiving the world in which this is part of the job seems beyond their reach.)

On the Effects Design side, as a passing note this is the most synth-free show I've done yet. The only sound of purely electronic origin that appears is the "sound" of the hot desert sun just before "Timbuktu Delirium." All the magical spot effects are instrumental samples (and a wind sample); rainstick, bamboo rattle...and an mbira brought back from Tanzania and played by my own clumsy thumbs.

But it is specifically effects design I am thinking hard about right now. I want to split the position again. I did three or four seasons with a co-designer; I engineered and set the "sound" of the show, he designed -- created and spotted and fine-tuned -- the effects.

I enjoy creating sounds. I enjoy it very much, and it is one of the things that brought me into theater in the first place. But I have some minor skill as a sound engineer and FOH, and that is a rarer skill in this environment. We can find someone else to create sound effects more easily than we can find someone else to engineer and mix the show.

(Actually, I think it might be best for this particular company if I left completely. Because maybe someone younger and better able to express themselves would be able to break down some of the institutional barriers and move sound in that theater up to the next level.)

(The risk, as in many such technical artistic fields, is that it would be just as likely for them to find someone without the appropriate skills, and for sound to suck in such a way that it drives audiences away and drives talent away without anyone involved being able to specifically articulate that it is because the sound sucks.)

(There's a common argument made; that some elements of technical art -- color balance in lighting, system EQ in sound, period accuracy in architectural details -- are, "Stuff only you experts notice." That most of the audience will be just as happy with crap or wrong crap. I strenuously disagree.)

(If you put a dress of the wrong period on stage, no audience member will leap to their feet and say, "That bustle is 1889, not 1848!" But they will have -- even a majority of the audience will have -- a slight uncomfortable feeling, an itch they can't scratch, a strange sound coming from an empty room; a sense that Something is Not Right. And it will make their experience less than it could be. They may never write on the back of the feedback card, "The reverb tails were too long and disrupted some of the harmonies," but they will write things like, "The music could have been better.")

(Many audience members, and a disheartening number of production staff and management, have no idea of 9/10ths of what my board does. But when it all works correctly, the tech-weenie stuff we FOH talk about between ourselves in our own indecipherable tongue brings out results that are easy to put in plain language and easily heard by most ears; sound that is "pleasing, well-balanced, audible, clean dialog, exciting, full, etc. etc.")

But back to the subject.

Thing is, on a straight play the Sound Designer is almost entirely concerned with Effects. They can sit in rehearsal with a notebook, spotting sounds and transitions and underscores, taking timing notes, even recording bits of dialog or action in order to time the effect properly. During tech, they are out in the audience area where they can hear how the sound plays, and can relay those discoveries about volume and placement back to the electricians and board operators.

On a musical, you are also trying to deal with the band, their reinforcement, monitor needs for band and actors, and of course those dratted wireless microphones. And far too many of the effects are going to happen when there are already a dozen things happening that demand your attention.

In my current house, two other factors make the job nearly impossible. The first is that due to budget we have carved down from up to four people on the job (Mic Wrangler, Board Mixer, Designer, and Sound Assistant -- during the load-in only), to....one.

I am repairing the microphones, personally taping them on actors and running fresh batteries back stage, installing all of the speakers and microphones and other gear, tuning the house system, helping the band set up, mixing the band, mixing the actors...and also all the stuff that has to do with effects.

The other factor at the current house is short tech weeks and a very....er...flexible...approach to creativity. We feel it is important to celebrate and sustain all those flashes of inspiration that come even in the middle of a busy tech with only hours left before the first audience arrives.

In other houses, we go into lock down earlier. Only when an idea is clearly not working do we stop and swap out -- and even then, it is understood by all parties that this will have a serious impact on every department and thus is not undertaken lightly.

When scenes are being re-blocked up to minutes before the doors open for the opening night audience, the idea of being able to set an effect early in tech, stick it in the book, and not have to come back to it, well...

This can be done. I built my first shows on reel-to-reel decks, bouncing tracks multiple times to build up layered effects. Modern technology means we can be very, very nimble. But it is getting increasingly difficult to be this nimble on top of the musical needs of the show. This is why I want to split the job.

Two of the technical tools I've been relying on more of late are within QLab. One is the ability to assign hot keys. This way, even if I've incorrectly spotted the places a recurrent effect has to happen, I can still catch it on the fly by hitting the appropriate key on the laptop during performance.

The other is groups and sound groups.

Most effects you create will have multiple layers. Something as simple as a gunshot will be "sweetened" with something for a beefier impact (a kick drum sample works nice for some), and a bit of a reverb tail.

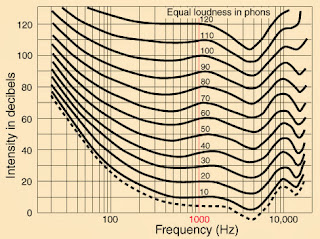

But just as relative frequency sensitivity is dependent on volume, dynamic resolution is also volume-dependent. And with the human ear, these are time-mapped and spatially mapped as well; the ear that has most recently heard a loud, high-pitched sound will hear the next sound that comes along as low and muffled.

Which means that no matter how much time you spend in studio, or even in the theater during tech setting the relative levels of the different elements of your compound sound, when you hit the full production with amplified singers and pit and the shuffling of actor feet (and the not-inconsiderable effect of meat-in-seats when the preview audience is added to the mix!) your balance will fall apart. The gun will sound like it is all drum. Or too thin. Or like it was fired in a cave. Or completely dry.

So instead, you split out the layers of the sound as stems and play them all together in QLab. Grouped like this, a single fade or stop cue will work on all the cues within the group. And you can adjust the level of the different elements without having to go back into ProTools (or whatever the DAW of your choice is).

This also of course gives flexibility for those inevitable last-minute Director's notes ("Could the sound be two seconds shorter, and is that a dog barking? I don't like the dog.")

(How I construct these: first I get the complete sound to sound right, preferably on the actual speakers. Then I selectively mute groups of tracks and render each stem individually. Placed in a group and played back together, the stems reconstruct the original mix. The art comes, of course, in figuring out which elements need to have discrete stems!)
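For the programmers in the audience, here is a rough Python sketch of why the stem trick works. It assumes nothing about QLab itself; `db_to_gain`, `mix_stems`, and the sample values are all hypothetical, made up for illustration. Summing the untouched stems gives back the original mix, and trimming one stem changes only that layer.

```python
def db_to_gain(db: float) -> float:
    """Convert a level change in decibels to a linear amplitude multiplier."""
    return 10.0 ** (db / 20.0)


def mix_stems(stems, trims_db=None):
    """Sum equal-length stems sample by sample, with an optional dB trim per stem."""
    trims_db = trims_db or {}
    length = len(next(iter(stems.values())))
    if any(len(samples) != length for samples in stems.values()):
        raise ValueError("all stems must be the same length")
    mix = [0.0] * length
    for name, samples in stems.items():
        gain = db_to_gain(trims_db.get(name, 0.0))  # untrimmed stems play at unity
        for i, x in enumerate(samples):
            mix[i] += gain * x
    return mix


# Hypothetical three-layer gunshot, a few samples each (made-up numbers).
gunshot_stems = {
    "crack": [0.50, 0.25, 0.0, 0.0],
    "kick": [0.25, 0.25, 0.125, 0.0],
    "reverb": [0.0, 0.125, 0.125, 0.0625],
}

original_mix = mix_stems(gunshot_stems)                    # the mix as rendered
drier_mix = mix_stems(gunshot_stems, {"reverb": -6.0})     # reverb tail pulled down
```

With no trims, `original_mix` is just the sample-by-sample sum of the three layers, which is what the grouped playback is approximating. Pulling the reverb stem down 6 dB roughly halves that layer and leaves the crack and kick alone.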

(QLab will play "close enough" to synchronized when the files are short enough. For longer files, consider rendering as multi-channel WAV files and adjusting the playback volumes on a per-channel basis instead. I tried this out during "Seussical" but it didn't seem worth the bother for most effects.)
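A sketch of the multi-channel idea, again in Python and using only the standard library `wave` module. The function names and the 48 kHz default are assumptions for illustration, and whether a given playback program accepts the resulting file is something to test rather than assume. The point is that stems interleaved into one file share a single clock, so they cannot drift apart.

```python
import io
import struct
import wave


def interleave_stems(stems):
    """Turn per-stem sample lists into frames: one sample per channel per frame."""
    if len({len(samples) for samples in stems}) != 1:
        raise ValueError("all stems must be the same length")
    return [sample for frame in zip(*stems) for sample in frame]


def write_multichannel_wav(target, stems, sample_rate=48000):
    """Write 16-bit PCM, one channel per stem; samples are floats in [-1.0, 1.0]."""
    interleaved = interleave_stems(stems)
    # Scale to 16-bit integers and clamp so an overshoot cannot wrap around.
    pcm = [max(-32768, min(32767, round(x * 32767))) for x in interleaved]
    with wave.open(target, "wb") as wav:
        wav.setnchannels(len(stems))
        wav.setsampwidth(2)  # bytes per sample
        wav.setframerate(sample_rate)
        wav.writeframes(struct.pack("<%dh" % len(pcm), *pcm))


# Hypothetical three stems of two samples each, written to memory instead of disk.
buffer = io.BytesIO()
write_multichannel_wav(buffer, [[0.5, 0.25], [0.0, -0.25], [0.125, 0.0]])

buffer.seek(0)
with wave.open(buffer, "rb") as check:
    channels, frames = check.getnchannels(), check.getnframes()
```

Reading the file back reports one channel per stem and one frame per sample position, so each stem can still be leveled separately at playback while staying sample-locked to the others.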

Thing of it is, though...

My conception of sound design is one of total design. Even though it takes the pressure off to have a sound assistant running sound cues (or on simpler shows, the Stage Manager), I consider the effects part of the total sound picture of the show. To me, the background winds in this show are as much a part of the musical mix as is the hi-hat. As a minimum, I'm tracking effects through the board so I have moment-by-moment control of their level and how they sit in the final mix. It is sort of a poor man's, done-live rendition of the dubbing mix stage of a movie.

The avenue that continues to beckon has been the idea that, somehow, I could do the bulk of the work offline and before we hit the hectic days of tech. This has always before foundered on not having the props, the blocking, or any of the parts I usually depend on to tell me what a sound should actually be (and to discover the all-important timing.)

Since this won't move, it is possible that what I have to explore is more generic kinds of sounds. I've been using QLab and other forms of non-linear playback for a while to make it possible to "breathe" the timing. Perhaps I can explore more taking development out of the effects and building them instead into the playback.

Except, of course, that is largely just moving the problem; creating a situation where I need the time to note and adjust the cueing of built sound effects, as opposed to doing the same adjustment on the audio files themselves. And in the pressure of a show like the one I just opened, I don't even have the opportunity to scribble a note about a necessary change in timing or volume!

And the more the "beats" of an effect sequence are manually triggered, the more I need that second operator in order to work them whilst still mixing the rest of the show. There's one sequence in this show -- the nearly-exploding boiler -- that has eight cues playing in a little over one script page. There are already several moments in this show where I simply cannot reach the "go" button for a sound cue at the same time I need to bring up the chorus mics.

Perhaps the best avenue to explore, then, is generic cues; sounds so blah and non-specific they can be played at the wrong times and the wrong volumes without hurting the show! Which is the best argument for synth-based sounds I know...

(The other alternative is to make it the band's problem. But they are already juggling multiple keyboards and percussion toys and several laptop computers of their own and even a full pedal-board and unless it appears in the score, they are not going to do a sound effect!)

Know how you feel Mike. I'm roughly 15 minutes away from Theatreworks, perhaps grab some lunch and share some war stories.
